
How to install apache spark on linux10/31/2022   On Spark Web UI, you can see how the Spark Actions and Transformation operations are executed. Spark-shell also creates a Spark context web UI and by default, it can access from Spark Web UIĪpache Spark provides a suite of Web UIs (Jobs, Stages, Tasks, Storage, Environment, Executors, and SQL) to monitor the status of your Spark application, resource consumption of Spark cluster, and Spark configurations. Make sure you have Python installed before running pyspark shell.īy default, spark-shell provides with spark (SparkSession) and sc (SparkContext) object’s to use. In order to run PySpark, you need to open pyspark shell by running $SPARK_HOME/bin/pyspark.

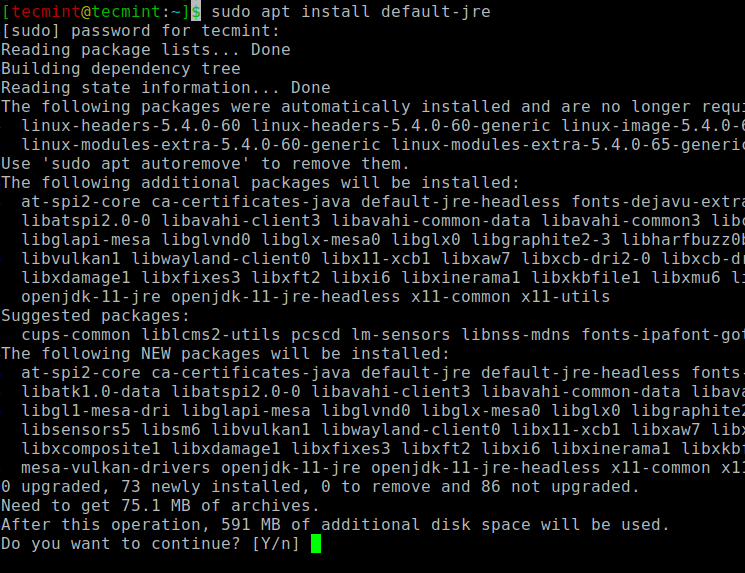

Note: In spark-shell you can run only Spark with Scala. This command loads the Spark and displays what version of Spark you are using. In order to start a shell to use Scala language, go to your $SPARK_HOME/bin directory and type “ spark-shell“. Spark-submit -class .SparkPi spark/examples/jars/spark-examples_2.12-3.0.1.jar 10Īpache Spark binary comes with an interactive spark-shell. You can find spark-submit at $SPARK_HOME/bin directory. Here I will be using Spark-Submit Command to calculate PI value for 10 places by running .SparkPi example. Now let’s run a sample example that comes with Spark binary distribution. With this, Apache Spark Installation on Linux Ubuntu completes. profile file then restart your session by closing and re-opening the session. Now load the environment variables to the opened session by running below command :~$ source ~/.bashrc open file in vi editor and add below variables. Once untar complete, rename the folder to spark.Īdd Apache Spark environment variables to. #How to install apache spark on linux archive#Once your download is complete, untar the archive file contents using tar command, tar is a file archiving tool.
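To make the SparkPi run a little less opaque, here is a minimal plain-Python sketch of the kind of Monte Carlo estimate that example performs. It does not use Spark at all. The function name estimate_pi, the samples_per_slice default, and the fixed seed are assumptions chosen for illustration, and each "slice" here simply runs one after another instead of on a separate partition.

```python
import random


def estimate_pi(slices: int, samples_per_slice: int = 100_000, seed: int = 42) -> float:
    """Estimate Pi by sampling random points in the square [-1, 1] x [-1, 1]."""
    if slices < 1:
        raise ValueError("slices must be at least 1")
    rng = random.Random(seed)  # fixed seed (assumption) so runs are repeatable
    inside = 0
    # Each "slice" stands in for one partition of work; here they run sequentially.
    for _ in range(slices):
        for _ in range(samples_per_slice):
            x = rng.uniform(-1.0, 1.0)
            y = rng.uniform(-1.0, 1.0)
            # Count the points that land inside the unit circle.
            if x * x + y * y <= 1.0:
                inside += 1
    total = slices * samples_per_slice
    # The circle covers pi/4 of the square, so scale the hit ratio by 4.
    return 4.0 * inside / total


if __name__ == "__main__":
    print(f"Pi is roughly {estimate_pi(10)}")
```

In this sketch, raising slices only adds more samples, which tends to tighten the estimate. It does not set how many digits are printed or guaranteed to be correct.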
